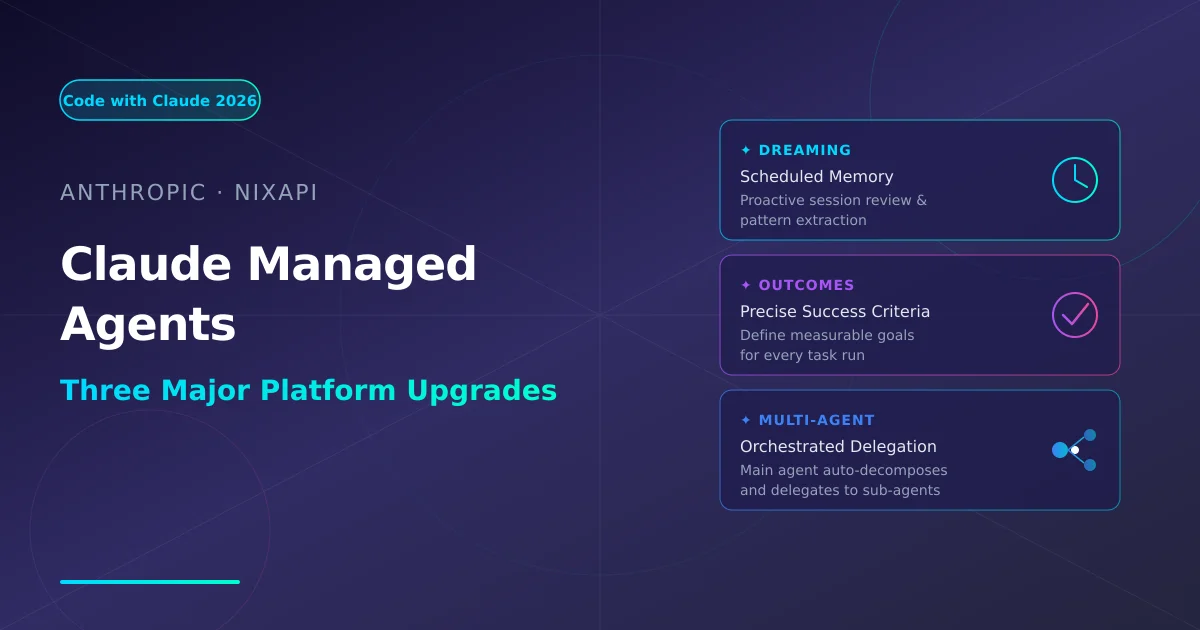

Anthropic Claude Managed Agents: Dreaming, Outcomes & Multi-agent Orchestration Explained

Anthropic's Code with Claude 2026 conference unveiled three major upgrades to Claude Managed Agents: Dreaming for proactive memory, Outcomes for precise task evaluation, and Multi-agent Orchestration for automatic task delegation. This article covers the technical internals, the API integration patterns, and what the upgrades mean for AI Agent developers.

Note: All facts are drawn from Anthropic’s official release page (anthropic.com/news/claude-code) and from May 2026 reports by Ars Technica and 9to5Mac. No undisclosed information is included.

1. What happened

At the Code with Claude conference (May 6–7, 2026), Anthropic announced three major platform updates for Claude Managed Agents:

| Update | Core Functionality | Problem Solved |
|---|---|---|
| Dreaming | Scheduled session review that proactively extracts patterns into memory | Agents previously could not learn proactively between sessions; now they get smarter over time |
| Outcomes | Developers define precise, quantifiable task success criteria | Replaces fuzzy evaluation with automated pass/fail checks across multiple dimensions |
| Multi-agent Orchestration | Main Agent decomposes tasks and delegates to specialized sub-agents | Removes the capability ceiling of a single agent |

Bonus: usage limits for Claude Code Pro and Max were doubled, and API rate limits for Opus models were increased.

2. Dreaming: Agents That “Dream”

The Traditional Memory Problem

Memory in mainstream Agents is passive:

- User says something → stored in context window

- Session ends → memory vanishes (or relies on external vector DB)

- Next session → starts from scratch, can’t leverage historical patterns

How Dreaming Works

Dreaming is not literally dreaming — it’s a scheduled batch-processing memory integration mechanism:

```
┌────────────────────────────────────────┐
│ User works with Agent (N sessions)     │
└───────────────────┬────────────────────┘
                    ↓
┌────────────────────────────────────────┐
│ Dreaming Trigger (configurable)        │
│ - Extract high-frequency task patterns │
│ - Identify user preferences            │
│ - Find common error patterns           │
│ - Write to long-term vector store      │
└───────────────────┬────────────────────┘
                    ↓
┌────────────────────────────────────────┐
│ Next session: Agent loads rich memory  │
│ "Last time user preferred Y for X"     │
└────────────────────────────────────────┘
```
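The "extract high-frequency task patterns" step in the diagram can be pictured as a frequency count over past sessions. The following is a minimal sketch of that idea, not Anthropic's implementation: the `SessionRecord` shape, the `extractFrequentPatterns` function, and the `minCount` threshold are hypothetical names chosen for illustration.

```typescript
// Hypothetical shape of one finished session (an assumption for illustration).
interface SessionRecord {
  sessionId: string;
  taskTypes: string[]; // labels for the kinds of task handled in the session
}

interface PatternCount {
  taskType: string;
  count: number; // number of sessions in which the task type appeared
}

// Count how many sessions each task type appears in and keep the ones seen
// in at least `minCount` sessions, most frequent first.
function extractFrequentPatterns(sessions: SessionRecord[], minCount: number): PatternCount[] {
  const counts: Record<string, number> = Object.create(null);
  for (const session of sessions) {
    // De-duplicate within a session so one long session cannot dominate.
    const seen: Record<string, boolean> = Object.create(null);
    for (const taskType of session.taskTypes) {
      if (seen[taskType]) continue;
      seen[taskType] = true;
      counts[taskType] = (counts[taskType] || 0) + 1;
    }
  }
  return Object.keys(counts)
    .map((taskType) => ({ taskType, count: counts[taskType] }))
    .filter((pattern) => pattern.count >= minCount)
    .sort((a, b) => b.count - a.count || a.taskType.localeCompare(b.taskType));
}

const pastSessions: SessionRecord[] = [
  { sessionId: 's1', taskTypes: ['write-tests', 'refactor'] },
  { sessionId: 's2', taskTypes: ['write-tests', 'write-tests'] },
  { sessionId: 's3', taskTypes: ['refactor', 'write-docs'] },
  { sessionId: 's4', taskTypes: ['write-tests'] },
];

console.log(extractFrequentPatterns(pastSessions, 2));
```

With this sample data, `write-tests` (three sessions) and `refactor` (two sessions) survive the threshold, while `write-docs` is dropped. A real memory step would presumably store richer summaries than labels, but the shape of the computation is similar.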

API Integration

```typescript
// Dreaming configuration example
// `client` is assumed to be an already-initialized Anthropic API client
const agent = await client.beta.managedagents.sessions.create({
  model: 'claude-opus-4-7',
  dreaming: {
    enabled: true,
    // Triggers every 24h or after 50 interactions
    triggerInterval: '24h',
    triggerInteractions: 50,
    memoryStore: {
      type: 'vector',
      // Works with Pinecone / Weaviate / Atlas Vector Search
      endpoint: process.env.VECTOR_STORE_URL,
      index: 'claude-agent-memories',
    },
  },
});
```

⚠️ Dreaming is in Beta; access requires application via Anthropic Console.
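The configuration comment says a review triggers "every 24h or after 50 interactions". One way to read that rule is as a simple either-or check, sketched below. This is an illustration under assumptions: `parseIntervalMs`, `isDreamingDue`, the `DreamingState` shape, and the supported `m`/`h`/`d` suffixes are all hypothetical, and the managed service may evaluate the trigger differently.

```typescript
interface DreamingTrigger {
  triggerInterval: string; // e.g. '24h'
  triggerInteractions: number; // e.g. 50
}

// Hypothetical bookkeeping kept between reviews (an assumption).
interface DreamingState {
  lastRunAtMs: number; // timestamp of the previous review
  interactionsSinceLastRun: number;
}

// Convert '30m' / '24h' / '7d' into milliseconds.
// The set of supported suffixes is an assumption of this sketch.
function parseIntervalMs(interval: string): number {
  const match = /^(\d+)([mhd])$/.exec(interval);
  if (!match) {
    throw new Error('Unsupported interval: ' + interval);
  }
  const unitMs: Record<string, number> = { m: 60000, h: 3600000, d: 86400000 };
  return parseInt(match[1], 10) * unitMs[match[2]];
}

// A review is due when either the interval has elapsed or enough
// interactions have accumulated since the last review.
function isDreamingDue(cfg: DreamingTrigger, state: DreamingState, nowMs: number): boolean {
  const intervalElapsed = nowMs - state.lastRunAtMs >= parseIntervalMs(cfg.triggerInterval);
  const enoughInteractions = state.interactionsSinceLastRun >= cfg.triggerInteractions;
  return intervalElapsed || enoughInteractions;
}

const triggerConfig: DreamingTrigger = { triggerInterval: '24h', triggerInteractions: 50 };
const oneHourMs = 3600000;

// One hour after the last review, but 50 interactions already happened.
console.log(isDreamingDue(triggerConfig, { lastRunAtMs: 0, interactionsSinceLastRun: 50 }, oneHourMs)); // true
// One hour after the last review with only 10 interactions.
console.log(isDreamingDue(triggerConfig, { lastRunAtMs: 0, interactionsSinceLastRun: 10 }, oneHourMs)); // false
```

Passing `nowMs` in explicitly rather than reading the clock inside the function keeps the check deterministic and easy to test.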

3. Outcomes: Precise Task Success Criteria

Why Outcomes Matters

Traditional evaluation is manual or rule-based:

```typescript
// Traditional heuristics
const succeeded = response.includes('success'); // ❌ Easily gamed by prompt injection
const complete = hasAttachment();               // ❌ High false-positive rate
```
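To see why the substring rule is fragile, consider a minimal sketch. `naiveSuccessCheck` is a hypothetical helper that mirrors the first rule above; the sample report is made up for illustration.

```typescript
// Mirrors the "response contains 'success'" rule.
function naiveSuccessCheck(response: string): boolean {
  return /success/.test(response);
}

// A failed run that merely mentions the word still passes the check.
const failedRunReport = 'Build failed: 3 type errors. The task was not a success.';
console.log(naiveSuccessCheck(failedRunReport)); // true
```

Any text that reaches the response, including text injected through a prompt, can flip the verdict, which is the weakness that code-driven criteria are meant to close.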

Outcomes lets developers define code-driven, quantifiable success criteria:

```typescript
// Outcomes definition example
// compileTypescript, extractTestCases and containsCredentials are assumed to be
// helper functions supplied by the developer; extractTestCases is assumed to
// return the number of test cases found.
const outcomes = [
  {
    name: 'code-compiles',
    description: 'Generated code must pass TypeScript compilation',
    evaluation: async ({ output, context }) => {
      const tsResult = await compileTypescript(output.generatedCode);
      return tsResult.success && tsResult.errorCount === 0;
    },
    weight: 2.0, // Higher weight
  },
  {
    name: 'has-tests',
    description: 'Must include at least 3 unit tests',
    evaluation: async ({ output }) => {
      const testCount = extractTestCases(output.generatedCode);
      return testCount >= 3;
    },
    weight: 1.0,
  },
  {
    name: 'no-security-hotspots',
    description: 'No hardcoded passwords or API keys',
    evaluation: async ({ output }) => {
      return !containsCredentials(output.generatedCode);
    },
    weight: 1.5,
  },
];

// Start a run in the existing session, attaching the success criteria
const run = await client.beta.managedagents.runs.create({
  sessionId: agent.sessionId,
  task: 'Implement a user authentication middleware',
  outcomes,
});

// Wait for the run to finish, then read the per-criterion results
const result = await client.beta.managedagents.runs.wait(run.id);
console.log(result.outcomeScores);
// { 'code-compiles': true, 'has-tests': true, 'no-security-hotspots': true }
```
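The example above calls `extractTestCases` and `containsCredentials` without defining them. A rough sketch of what such helpers might look like follows. Both implementations are assumptions based on simple regular expressions; a real project would more likely rely on a parser and a dedicated secret scanner.

```typescript
// Count `it(...)` / `test(...)` calls as a rough proxy for the number of unit tests.
function extractTestCases(source: string): number {
  const matches = source.match(/\b(?:it|test)\s*\(/g);
  return matches ? matches.length : 0;
}

// Flag string literals assigned to names that look like secrets,
// e.g. `apiKey = '...'` or `dbPassword: "..."`.
function containsCredentials(source: string): boolean {
  const secretAssignment = /(?:password|passwd|secret|api[_-]?key|token)\b\s*[:=]\s*['"][^'"]+['"]/i;
  return secretAssignment.test(source);
}

const generatedCode = [
  "const apiKey = 'sk-live-123';",
  "test('rejects missing token', () => {});",
  "it('accepts valid token', () => {});",
].join('\n');

console.log(extractTestCases(generatedCode)); // 2
console.log(containsCredentials(generatedCode)); // true
```

Against this sample, the `has-tests` criterion would fail (two tests, three required) and `no-security-hotspots` would fail because of the hardcoded key.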

Real-world Value

- Automated evaluation: No human review needed, CI/CD pipeline ready

- Multi-dimensional scoring: each criterion is evaluated separately and carries its own weight, so a run is not collapsed into a single binary Pass/Fail

- Traceable: Full score history per run — enables A/B model comparison
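The weights in the `outcomes` array suggest that per-criterion results can be folded into a single number for a CI gate. How the platform aggregates them is not stated in the source, so the normalized weighted average below is an assumption, as are the `weightedScore` and `ciExitCode` names and the 0.8 threshold.

```typescript
interface WeightedCriterion {
  name: string;
  weight: number;
}

// Normalized weighted average: the weight of the passed criteria divided by
// the total weight. Criteria missing from `passed` count as failed.
function weightedScore(criteria: WeightedCriterion[], passed: Record<string, boolean>): number {
  let total = 0;
  let earned = 0;
  for (const criterion of criteria) {
    total += criterion.weight;
    if (passed[criterion.name] === true) {
      earned += criterion.weight;
    }
  }
  return total === 0 ? 0 : earned / total;
}

// Map a score to a process exit code so that a CI job can fail the build.
function ciExitCode(score: number, threshold: number): number {
  return score >= threshold ? 0 : 1;
}

const criteria: WeightedCriterion[] = [
  { name: 'code-compiles', weight: 2.0 },
  { name: 'has-tests', weight: 1.0 },
  { name: 'no-security-hotspots', weight: 1.5 },
];

// Same shape as `result.outcomeScores`, but with one failing criterion.
const runScores = { 'code-compiles': true, 'has-tests': false, 'no-security-hotspots': true };
const overall = weightedScore(criteria, runScores);
console.log(overall.toFixed(3), ciExitCode(overall, 0.8)); // prints "0.778 1"
```

Here the missing tests cost 1.0 of the 4.5 total weight, which drops the score to about 0.778, below the assumed 0.8 threshold, so the gate returns a non-zero exit code.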

4. Multi-agent Orchestration

When to Use It

When a task is too complex for a single Agent, orchestrating the workflow by hand is tedious. Multi-agent Orchestration lets the main Agent decide automatically what to delegate:

```typescript
// Main Agent setup
const orchestrator = await client.beta.managedagents.orchestration.create({
  model: 'claude-opus-4-7',
  agents: [
    {
      id: 'code-writer',
      role: 'Code Generation Expert',
      systemPrompt: 'You specialize in writing high-quality code from requirements...',
      capabilities: ['code-generation', 'refactoring'],
    },
    {
      id: 'test-engineer',
      role: 'Test Engineer',
      systemPrompt: 'You specialize in writing comprehensive unit and integration tests...',
      capabilities: ['test-generation', 'coverage-analysis'],
    },
    {
      id: 'security-reviewer',
      role: 'Security Reviewer',
      systemPrompt: 'You specialize in finding security vulnerabilities in code...',
      capabilities: ['security-analysis', 'vulnerability-detection'],
    },
  ],
  routingPolicy: 'automatic', // Main Agent decides automatically
  maxDelegations: 5, // Prevent infinite loops
});

// One prompt, main Agent auto-decomposes and delegates
const result = await orchestrator.run({
  task: 'Implement JWT auth middleware with full tests and security review',
});
```
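Conceptually, `routingPolicy: 'automatic'` combined with `maxDelegations` can be pictured as a capability lookup guarded by a delegation budget. In the managed service the main Agent, a model, makes these decisions. The deterministic router below is only a local illustration, and `planDelegations`, `SubTask`, and the first-match rule are hypothetical.

```typescript
interface SubAgent {
  id: string;
  capabilities: string[];
}

// Hypothetical unit of work produced by decomposing the original task.
interface SubTask {
  description: string;
  requiredCapability: string;
}

interface Delegation {
  agentId: string;
  description: string;
}

// Assign each subtask to the first agent that advertises the required
// capability, and stop once the delegation budget is spent.
function planDelegations(agents: SubAgent[], subTasks: SubTask[], maxDelegations: number): Delegation[] {
  const plan: Delegation[] = [];
  for (const subTask of subTasks) {
    if (plan.length >= maxDelegations) {
      break; // budget exhausted: remaining subtasks are not delegated
    }
    for (const agent of agents) {
      if (agent.capabilities.indexOf(subTask.requiredCapability) !== -1) {
        plan.push({ agentId: agent.id, description: subTask.description });
        break; // first matching agent wins
      }
    }
  }
  return plan;
}

const subAgents: SubAgent[] = [
  { id: 'code-writer', capabilities: ['code-generation', 'refactoring'] },
  { id: 'test-engineer', capabilities: ['test-generation', 'coverage-analysis'] },
  { id: 'security-reviewer', capabilities: ['security-analysis', 'vulnerability-detection'] },
];

const subTasks: SubTask[] = [
  { description: 'Write the JWT middleware', requiredCapability: 'code-generation' },
  { description: 'Write unit tests', requiredCapability: 'test-generation' },
  { description: 'Review for vulnerabilities', requiredCapability: 'security-analysis' },
];

console.log(planDelegations(subAgents, subTasks, 5).map((d) => d.agentId));
```

With a budget of 5, the three subtasks go to `code-writer`, `test-engineer`, and `security-reviewer` in that order. Lowering the budget to 2 would leave the security review undelegated, which shows how a hard cap bounds the amount of delegation regardless of how the task is decomposed.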

vs. Traditional Multi-agent Frameworks (LangGraph, etc.)

| Feature | Traditional Multi-agent (LangGraph etc.) | Claude Orchestration |
|---|---|---|
| Routing logic | Manual by developer | Main Agent auto-decides |
| Memory sharing | Sub-agents independent | Unified context, injected on demand |
| Error handling | Manual implementation | Auto-retry + Outcome evaluation |
| Integration cost | High (build your own infra) | One API call |
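The "Auto-retry + Outcome evaluation" cell can be read as a bounded loop: run, evaluate, and retry on failure. The platform's actual retry behavior is not described in the source, so the sketch below is an assumption. `runUntilOutcomesPass` and its parameters are hypothetical, and the loop is synchronous for brevity where a real integration would await API calls.

```typescript
interface AttemptResult<T> {
  output: T | undefined; // last output produced, if any attempt ran
  attempts: number; // how many attempts were made
  passed: boolean; // whether the final output met every outcome
}

// Re-run `attempt` until `allOutcomesPass` accepts its output
// or the attempt budget is used up.
function runUntilOutcomesPass<T>(
  attempt: (attemptNumber: number) => T,
  allOutcomesPass: (output: T) => boolean,
  maxAttempts: number,
): AttemptResult<T> {
  let lastOutput: T | undefined = undefined;
  let attempts = 0;
  while (attempts < maxAttempts) {
    attempts += 1;
    lastOutput = attempt(attempts);
    if (allOutcomesPass(lastOutput)) {
      return { output: lastOutput, attempts, passed: true };
    }
  }
  return { output: lastOutput, attempts, passed: false };
}

// Simulated agent: the first two drafts contain no tests, the third does.
const drafts = ['// draft without tests', '// still no tests', "test('works', () => {});"];
const outcomeReport = runUntilOutcomesPass(
  (n) => drafts[n - 1],
  (code) => /\btest\s*\(/.test(code),
  3,
);
console.log(outcomeReport.attempts, outcomeReport.passed); // prints "3 true"
```

Bounding the loop with `maxAttempts` serves the same purpose as `maxDelegations` in the orchestration example: a failing criterion cannot cause unbounded retries.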

5. Usage Limit Updates

| Plan | Change |
|---|---|
| Claude Code Pro | Monthly Max Turns doubled (500 → 1,000) |
| Claude Code Max | Monthly high-speed runtime doubled |
| Opus Model API | Rate limit increased, higher concurrency supported |

6. NixAPI Integration Path

```typescript
// Access Claude Managed Agents via NixAPI
import { NixAPI } from '@nixapi/client';

const client = new NixAPI({
  apiKey: process.env.NIXAPI_KEY,
  provider: 'anthropic',
});

const agent = await client.managedAgents.sessions.create({
  model: 'claude-opus-4-7',
  dreaming: {
    enabled: true,
    triggerInterval: '24h',
  },
});

// Outcome-driven task execution
const result = await client.managedAgents.runs.create({
  sessionId: agent.id,
  task: 'Write complete unit tests for the payment module',
  outcomes: ['code-compiles', 'has-tests', 'no-security-hotspots'],
});

console.log(result.outcomeScores);
```

NixAPI supports the full Claude Managed Agents API suite — including Dreaming, Outcomes, and Multi-agent Orchestration.

7. Key Takeaways

| Capability | Best For | Current Status |
|---|---|---|
| Dreaming | Long-running personal assistants, enterprise support bots, data analysis Agents | Beta, application required |
| Outcomes | CI/CD integration, automated evaluation, production Agent reliability | Beta, application required |
| Multi-agent Orchestration | Complex task decomposition, high-concurrency Agent systems | Beta, application required |

All three point in the same direction: transforming Agents from “single-shot tools” into “learnable, evaluable, collaborative intelligence”.

For NixAPI users, these capabilities unlock more powerful multi-model routing scenarios: Dreaming makes routing policies smarter over time, Outcomes provides data for model selection, and Multi-agent Orchestration lets a complex task be decomposed so that each part goes to the best-suited model.

Developers should apply for Beta access now and begin experimenting in controlled environments.
